It was 11:47 PM when Daniel finally read the email he’d been dreading for three weeks. His MVP app developers—the ones who’d promised him a “fully functional product in 90 days”—were asking for another $45,000. And the app still didn’t work.

Six months earlier, Daniel had been exactly where you might be right now: excited about his idea, terrified of choosing the wrong development team, and overwhelmed by the sheer number of MVP app developers claiming they could turn his vision into reality.

He’d done his homework. He’d compared pricing. He’d checked portfolios. He’d even asked for references. And yet, here he was at midnight, staring at a half-built product that had already consumed $127,000 of his $200,000 seed round, with nothing to show investors except excuses and missed deadlines.

“The worst part wasn’t the money,” Daniel told me over coffee two months later, after he’d started over with a different team. “It was realizing I’d wasted six months. My competitor launched three weeks ago. They used the same tech stack I’d planned, executed my exact positioning strategy, and they’re already at 4,000 users. I should have been first to market. Instead, I’m scrambling to catch up because I chose the wrong MVP app developers.”

Daniel’s story isn’t unique. According to research on startup failures, 42% of startups fail because they build products nobody wants. But plenty of others fail because they choose development partners who can’t execute their vision efficiently. The difference between these scenarios? One is a market problem. The other is a partnership problem, and it’s entirely preventable.

This is your guide to choosing MVP app developers who will actually help you succeed, written by someone who’s seen both sides of this equation and learned these lessons through painful, expensive experience.

What Makes MVP App Developers Different (And Why Most Founders Get This Wrong)

Here’s the uncomfortable truth that most development agencies won’t tell you: building an MVP requires a fundamentally different skillset than building enterprise software or consumer applications.

When you hire MVP app developers, you’re not just hiring people who can write code. You’re hiring strategic partners who understand the unique pressures, constraints, and objectives of early-stage startups. And that’s where most founders make their first critical mistake.

Traditional app developers are optimized for different outcomes. They excel at building robust, scalable, feature-complete applications for established companies with clear requirements and substantial budgets. They focus on code quality, comprehensive testing, extensive documentation, and long-term maintainability.

MVP app developers operate under completely different constraints. They’re optimized for speed, learning, and capital efficiency. They know how to identify your riskiest assumptions, build only what’s necessary to test those assumptions, and help you make data-driven decisions about what to build next.

Jessica learned this distinction the hard way. She hired a prestigious development agency with an impressive portfolio of Fortune 500 clients. “They were amazing developers,” she explained. “Every line of code was beautifully written. Their architecture was incredibly sophisticated. The problem? They spent three months building a user authentication system with enterprise-grade security features when I needed to validate whether anyone would even sign up for my platform.”

The agency wasn’t incompetent—they were just optimized for the wrong outcome. They gave Jessica exactly what she asked for: professional, scalable code. But what she actually needed was MVP app developers who would challenge her assumptions, help her identify the minimum features necessary to test her hypothesis, and get her to market in weeks, not months.

The Five Questions That Reveal Whether MVP App Developers Actually Understand Startups

After working with dozens of founders who’ve hired (and fired) multiple development teams, I’ve identified five questions that consistently separate MVP app developers who truly understand the startup game from those who are just claiming they do.

Ask these questions in your initial conversations. Pay attention not just to what they say, but to how they react to the questions themselves.

Question 1: “How do you decide what features to include in an MVP?”

Weak answer: “We build whatever you tell us to build. Just give us your requirements document and we’ll provide a quote.”

This response reveals order-takers, not strategic partners. True MVP app developers should push back on your feature list, interrogate your assumptions, and help you identify the absolute minimum needed to test your core hypothesis.

Strong answer: “We start by understanding your riskiest assumption—the thing that, if wrong, would invalidate your entire business model. Then we design the leanest possible experiment to test that assumption. Often, the right MVP doesn’t include half the features founders initially think they need.”

Marcus, who built a successful fintech app, told me about this exact conversation with the team that eventually became his MVP app developers: “When I showed them my 47-feature specification document, they didn’t give me a quote. They scheduled a three-hour working session to understand my business model, identify my biggest risks, and help me descope to 8 core features. I was frustrated at first—I wanted them to just build what I asked for. Looking back, that consultation saved me six months and probably $200,000.”

Question 2: “What happens when we discover our initial assumptions were wrong?”

Weak answer: “We can discuss change orders and provide revised quotes based on the new requirements.”

This approach treats pivots as expensive disruptions rather than expected learning opportunities. It reveals developers who are optimized for fixed-scope contracts, not iterative discovery.

Strong answer: “That’s exactly what MVPs are for—learning what’s actually needed. We structure our engagements with built-in flexibility for iteration. We expect that roughly 30-40% of initial features will either change significantly or be replaced based on what we learn from real users. Our process is designed to make those pivots fast and cost-effective.”

Question 3: “How do you handle technical debt in MVPs?”

Weak answer: “We build everything to production-quality standards from day one to avoid technical debt.”

This sounds responsible but reveals a fundamental misunderstanding of MVP strategy. Building everything to production quality before you’ve validated product-market fit is wasteful.

Strong answer: “We make strategic decisions about where to accept technical debt and where to invest in quality. User-facing features that test core assumptions can be built scrappy. Infrastructure for analytics, deployment, and iteration needs to be solid because you’ll rely on it for learning. We’re explicit about these trade-offs and help you understand the implications.”

Question 4: “What metrics do you track to measure MVP success?”

Weak answer: “We deliver on time, on budget, and according to spec. We measure our success by whether the code works as designed.”

This reveals developers who measure success by delivery, not by business outcomes. Your MVP’s success isn’t defined by whether it works—it’s defined by what you learn from it.

Strong answer: “We help you define success metrics before writing a single line of code. These might include user activation rates, feature engagement, retention, conversion rates, or qualitative feedback themes. We build analytics from day one and structure our development sprints around improving these metrics, not just adding features.”

Question 5: “Can you show me an example of an MVP you built that failed, and what you learned?”

Weak answer: “All our projects are successful. We have a 100% client satisfaction rate.”

This is either dishonest or reveals a team that’s never worked on real MVPs. In the MVP world, many experiments “fail” in the sense that they invalidate hypotheses—but that’s valuable learning, not failure.

Strong answer: “We worked with a startup building a social fitness app. We shipped an MVP in 8 weeks that technically worked perfectly but got almost no user engagement. Through analytics and user interviews, we discovered people didn’t want another social network—they wanted coaching. We helped the founder pivot to a different model, and that became a successful product. The MVP didn’t validate the original idea, but it did its job: it prevented the founder from wasting a year building the wrong thing.”

The Real Cost of MVP App Developers in 2026 (And Why Cheap Always Costs More)

Let’s address the elephant in every founder’s budget spreadsheet: how much should you actually pay MVP app developers?

I’ve seen founders make devastating mistakes on both ends of this spectrum. Some pay $200 per hour for enterprise developers building features they don’t need. Others pay $25 per hour for offshore teams who produce code that has to be completely rewritten.

Here’s what realistic MVP app development actually costs in 2026, based on current market rates and dozens of recent projects:

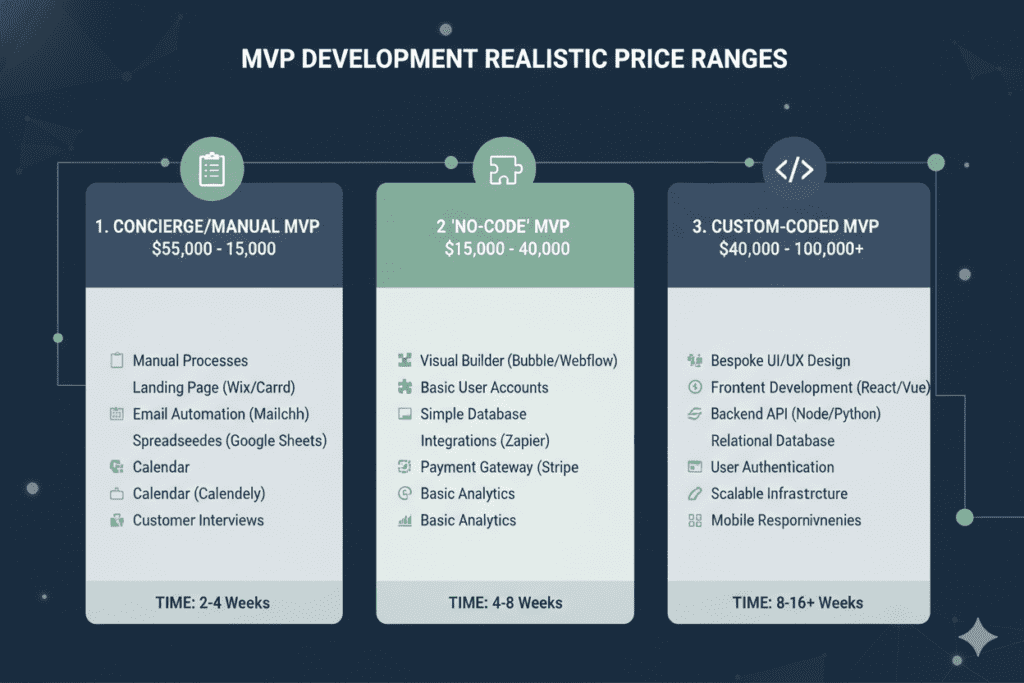

Landing Page + Manual Service MVP: $5,000 – $18,000

Sometimes the right MVP isn’t code at all. Many successful products started as landing pages with Stripe integration and manual service delivery. This validates willingness to pay before you invest in automation.

- Timeline: 1-3 weeks

- What you get: Professional landing page, payment integration, email automation, manual backend processes

- Best for: Service businesses, marketplaces, content platforms

- Example: Sarah validated her career coaching platform for $8,000 by manually delivering sessions she’d eventually automate

Simple Web App MVP: $25,000 – $65,000

A functional web application with user accounts, core workflow, database, and analytics. This is your first real product that users can interact with independently.

- Timeline: 6-10 weeks

- What you get: User authentication, 3-5 core features, admin dashboard, responsive design, deployment

- Best for: SaaS tools, internal business software, community platforms

- Example: Thomas built his project management MVP for $42,000 and validated demand with 200 paying beta users

Mobile App MVP (Cross-Platform): $45,000 – $95,000

A cross-platform mobile app with backend API, push notifications, and app store deployment. Using React Native or Flutter to ship iOS and Android from one codebase, instead of building separate native apps, cuts costs significantly.

- Timeline: 8-14 weeks

- What you get: iOS + Android apps, backend API, user accounts, push notifications, analytics, app store submission

- Best for: Consumer apps, mobile-first services, location-based products

- Example: Lisa’s food delivery MVP cost $68,000 using React Native, versus $140,000 quotes for separate native apps

Marketplace or Multi-Sided Platform: $65,000 – $150,000

Platforms connecting different user types (buyers/sellers, clients/providers) with matching, messaging, payments, and reviews.

- Timeline: 12-18 weeks

- What you get: Separate experiences for each user type, search/matching, in-app messaging, payment processing, rating system

- Best for: Freelance platforms, rental marketplaces, service marketplaces

- Example: David’s freelancer platform MVP was $87,000 and reached profitability in 5 months

These numbers might seem high if you’ve seen $5,000 quotes for a full app MVP online. Here’s why those ultra-cheap options almost always fail:

A $5,000 MVP typically means one of three things: (1) offshore developers who don’t understand your market or business context, (2) junior developers learning on your dime, or (3) agencies that will nickel-and-dime you with change orders until the total cost exceeds what quality developers would have charged upfront.

Rachel experienced this firsthand. She hired a $12,000 development team for her healthcare app MVP. “The initial quote was incredibly cheap,” she explained. “But they didn’t include user authentication. Or payment processing. Or the admin dashboard. Or proper analytics. Every time I asked about something that seemed obviously necessary, it was an extra charge. I ended up paying $68,000 for an inferior product that I eventually had to rebuild entirely with different MVP app developers who charged $55,000 but delivered something actually usable.”

Red Flags That Scream “Wrong MVP App Developers” (Ignore These At Your Own Risk)

Some warning signs aren’t obvious until you’re months into a failing engagement. But others are visible in initial conversations—if you know what to look for.

Here are the red flags that should make you walk away, no matter how impressive the portfolio or how attractive the pricing:

Red Flag #1: They Quote Without Understanding Your Business Model

If MVP app developers can give you a detailed quote after a 30-minute call where they barely asked about your business model, your target users, or your core assumptions, they’re not actually listening. They’re pattern-matching your request to their standard offerings.

Quality MVP app developers should push back. They should ask uncomfortable questions. They should make you explain why you think certain features matter. The quote should come after they understand your business, not before.

Red Flag #2: They Don’t Ask About Your Validation Strategy

If potential MVP app developers never ask how you plan to test your MVP, how you’ll measure success, or what you’ll do with the learnings, they see themselves as order-takers, not strategic partners.

The conversation should include: What’s your riskiest assumption? How will you validate it? What metrics will indicate success or failure? What’s your plan for user acquisition? How will you gather feedback?

If these topics never come up, you’re talking to developers, not MVP app developers.

Red Flag #3: Their Portfolio Shows Only Polished, Feature-Complete Products

Beautiful portfolio apps with dozens of features aren’t necessarily a good sign for MVP work. They might indicate a team that builds complete products for established companies, not lean experiments for startups.

Look for portfolios that show evolution: “Here’s version 1 with 3 features, here’s what we learned, here’s version 2 with different features based on user feedback.” That story demonstrates understanding of the MVP process.

Red Flag #4: They Promise Unrealistic Timelines

“We can build your full-featured marketplace app in 4 weeks” is almost always a lie or a severe misunderstanding of scope. Quality MVP app developers set realistic expectations.

For reference: a simple web app MVP takes 6-10 weeks at minimum, and a complex MVP such as a marketplace might take 12-18 weeks. Anyone promising dramatically faster delivery is either cutting critical corners or planning to deliver something far less functional than you’re imagining.

Red Flag #5: They Don’t Discuss Post-Launch Support

Your MVP launch isn’t the finish line—it’s the starting line for learning. If MVP app developers treat handoff as the end of the project, they don’t understand the iterative nature of product development.

Strong teams discuss: How will we monitor performance after launch? Who handles bug fixes? How do we prioritize next features based on user data? What’s the process for rapid iterations?

Kyle avoided a disaster by catching this red flag early: “I was close to signing with a team that seemed perfect—great portfolio, reasonable pricing, good communication. Then I asked about post-launch support and they said, ‘We deliver the code, then we’re done. You’ll need to hire another team for iterations.’ That told me everything I needed to know. They were building a deliverable, not a learning platform.”

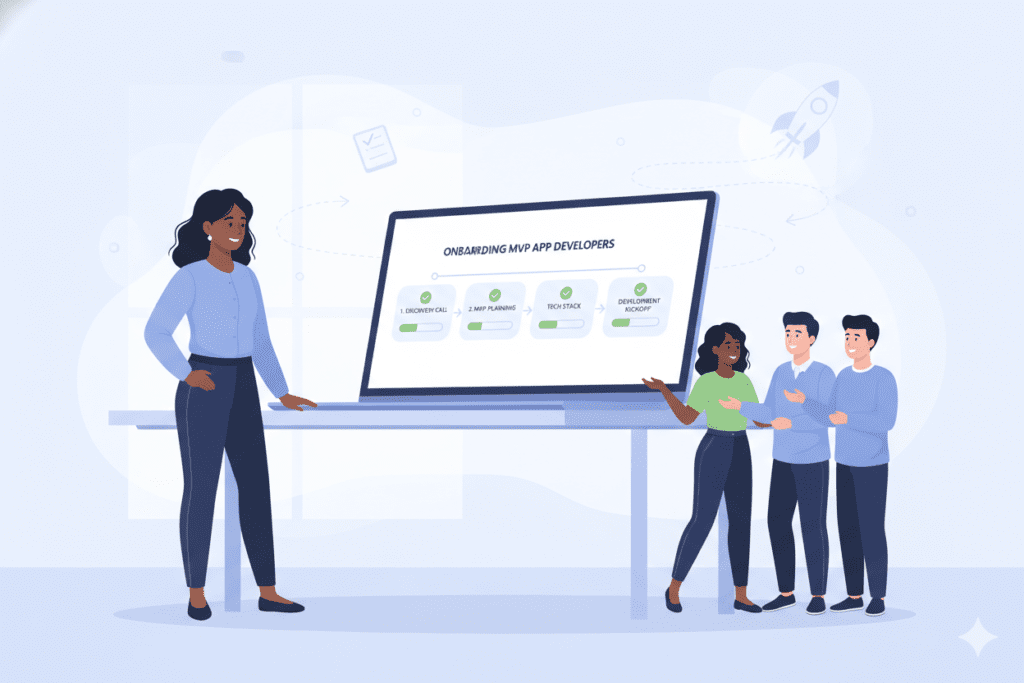

How BkAbhi Approaches MVP App Development Differently: A Founder-First Philosophy

At BkAbhi, we’ve built our entire approach to MVP app development around a simple principle: your success is measured by validated learning, not lines of code delivered.

This philosophy shapes everything about how we work with founders, from initial conversations through launch and beyond.

Weeks 1-2: Assumption Mapping and Risk Prioritization

We don’t start by discussing tech stacks or feature lists. We start by interrogating your business model and identifying what could kill your startup if you’re wrong about it.

We use a framework called the Assumption Risk Matrix that helps us identify which hypotheses are most dangerous. Not all assumptions matter equally. The assumption that “project managers want better task management” is low-risk and well-validated. The assumption that “they’ll pay $297/month for it” is high-risk and needs immediate testing.
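The article doesn’t spell out how the Assumption Risk Matrix scores each hypothesis. One common way to build such a matrix is to rate every assumption on impact (how much damage it does if it’s wrong) and uncertainty (how little evidence you have so far), then test the highest-scoring ones first. The Python sketch below assumes 1-5 scales and a simple impact-times-uncertainty score; the ratings and the third assumption are illustrative, not BkAbhi’s actual scoring.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    statement: str
    impact: int       # 1-5: damage to the business if this turns out to be wrong (assumed scale)
    uncertainty: int  # 1-5: how little evidence supports it today (assumed scale)

    @property
    def risk(self) -> int:
        # High impact AND high uncertainty means "test this first".
        return self.impact * self.uncertainty


def prioritize(assumptions: list[Assumption]) -> list[Assumption]:
    """Return assumptions ordered from riskiest to safest."""
    return sorted(assumptions, key=lambda a: a.risk, reverse=True)


backlog = [
    Assumption("Project managers want better task management", impact=5, uncertainty=1),
    Assumption("They'll pay $297/month for it", impact=5, uncertainty=5),
    # Hypothetical third assumption, added for illustration only.
    Assumption("Teams will invite colleagues without prompting", impact=3, uncertainty=4),
]

for a in prioritize(backlog):
    print(f"risk {a.risk:>2}: {a.statement}")
```

With these illustrative ratings, the pricing assumption scores 25 and the task-management assumption scores 5, which reproduces the article’s point: willingness to pay $297/month is what the MVP should test first.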

During these first two weeks, we help you conduct proper customer development interviews. We teach you to listen for problem intensity, not feature requests. We help you understand the actual job customers are hiring your product to do.

This discovery phase isn’t time taken away from coding; it’s an investment that typically saves 3-6 months of building the wrong features. We’d rather spend two weeks getting clarity than six months building beautifully engineered solutions to problems that don’t actually matter.

Weeks 3-8: Build Only What Tests Your Riskiest Assumption

Once we’ve identified your highest-risk assumptions, we design the absolute leanest test to validate or invalidate them. This is where our experience with dozens of MVPs creates real value—we know exactly which corners can be cut without compromising learning.

Sometimes the right MVP isn’t custom software. We’ve helped founders validate business models through Stripe-integrated landing pages, manual concierge services, or Wizard of Oz MVPs, where users think they’re interacting with automation but it’s actually the founder behind the curtain.

When custom code is necessary, we build for learning, not for scale. We use battle-tested technology stacks that enable rapid iteration. We ruthlessly descope features that don’t directly test your hypothesis. We deploy early and measure constantly.

Weeks 9-12: Measure, Learn, Decide

This is where many traditional developers drop the ball: they build what you asked for, deliver the code, and consider the job done. But we believe this phase is where the real value emerges.

We build measurement capabilities from day one. Every BkAbhi MVP includes event tracking, user analytics, and structured feedback mechanisms. We don’t just track whether features work—we track whether they’re solving real problems for real users.

Then we help you make the hard decisions. Do the metrics suggest you’re on the right track? Time to iterate and improve. Do they suggest your initial hypothesis was wrong? We help you pivot to a new approach without losing momentum or burning your remaining runway.

The Post-Launch Partnership

We structure our engagements with built-in flexibility for the iteration phase that follows launch. This isn’t about change orders or scope creep—it’s about acknowledging that the point of an MVP is to learn, and learning often means changing direction.

We’ve seen too many founders stuck in rigid contracts that make pivots prohibitively expensive. Our approach includes built-in pivot capacity so you can act quickly on what you learn.

Real Success Stories: How the Right MVP App Developers Transform Outcomes

Let me share three recent examples of how choosing the right MVP app developers—whether that’s BkAbhi or another quality team—made the difference between success and expensive failure:

Case Study: SaaS Founder Saves $180,000 By Starting With a Manual MVP

Amanda came to us with a comprehensive specification for an AI-powered content scheduling tool. Her initial quotes from traditional agencies ranged from $220,000 to $340,000 with 12-18 month timelines.

Through our discovery process, we identified her core assumption: social media managers would pay for AI-generated content suggestions. Everything else—the scheduling, the analytics, the team collaboration features—was secondary to validating that core value proposition.

Instead of building the full platform, we helped Amanda create a $15,000 concierge MVP: a simple form where users submitted their brand guidelines, and Amanda personally generated content suggestions using existing AI tools and delivered them via email.

She signed up 40 paying customers in the first month at $97 each, or $3,880 in revenue. More importantly, she learned that customers actually wanted content calendars more than AI suggestions, a critical insight that completely changed her roadmap.

Only after validating demand and understanding what customers truly valued did we invest in building custom automation. Her final MVP cost $52,000 and was generating $28,000 in monthly recurring revenue within four months. By starting with proper MVP app developers who prioritized learning over building, she saved over $180,000 and gained six months of market advantage.

Case Study: Mobile App Founder Reaches Break-Even in 6 Months

Kevin had a vision for a location-based social discovery app. Traditional mobile development shops quoted him $140,000-$180,000 for native iOS and Android apps.

We challenged his assumption that he needed both platforms from day one. Through user interviews, we discovered that 73% of his target demographic (urban professionals aged 25-35) used iPhones.

We built a React Native MVP focusing solely on iOS for the initial launch, investing $58,000 instead of $140,000. This allowed Kevin to launch 3 months earlier, test his core assumptions with real users, and iterate based on feedback.

The data from his iOS MVP completely changed his Android priorities. Features he’d planned to build first based on assumptions turned out to be rarely used. Features he’d deprioritized became the most-requested additions.

When we eventually built the Android version, it was informed by data from 3,000 active iOS users. The Android app cost $35,000 instead of the originally quoted $70,000 because we knew exactly what mattered. Total investment: $93,000. Time to break-even: 6 months. Revenue in month 12: $47,000/month.

Case Study: Marketplace Platform Pivots Successfully Without Burning the Budget

Sophie built a marketplace connecting pet owners with dog walkers. Her initial MVP cost $78,000 and launched with standard marketplace features: profiles, booking, payments, reviews.

After three months, she had 200 registered dog walkers but only 15 active pet owners, the opposite of the problem she’d expected. Traditional development agencies would have required new contracts and change orders to address this. Because Sophie chose a development partner who understood startup realities, her team had pivot capacity built in.

Through user research, we discovered the real problem: pet owners didn’t trust strangers with their pets based on profile photos and reviews. They wanted video introductions and background checks.

We pivoted the platform to focus on trust-building features: video profiles, verification badges, trial walk offers. This required scrapping some initially built features and redirecting that budget to new priorities.

The pivot cost $18,000—already budgeted in contingency—instead of the $55,000 new-contract approach other agencies quoted. Within two months of the pivot, active pet owner registrations increased 340%. Sophie’s platform is now processing $180,000 in monthly transaction volume.

The lesson from all three stories? The right MVP app developers don’t just build what you initially ask for. They help you learn what you should actually build, and they make iteration fast and cost-effective when learning changes your direction.

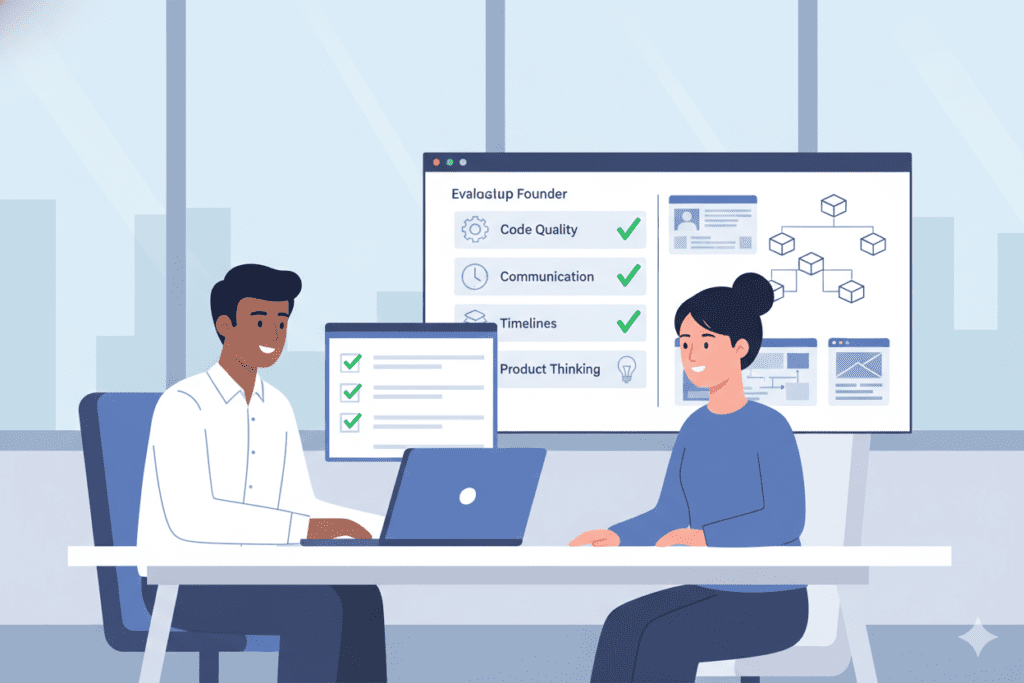

How to Evaluate MVP App Developers: The Practical Interview Framework

You’ve learned the red flags and green flags. Now here’s a practical framework for actually evaluating potential MVP app developers and making a confident decision.

Step 1: The Discovery Call Assessment (Week 1)

Your first conversation with potential MVP app developers reveals everything you need to know about how they think about product development.

Schedule 60-minute discovery calls with 3-5 potential teams. Don’t make these sales calls—make them working sessions. Come prepared with your business model, target users, and core assumptions.

Evaluate the conversation on these dimensions:

- How much time did they spend asking questions versus talking about their capabilities? (You want 60%+ questions)

- Did they challenge any of your assumptions or just nod along? (You want pushback)

- By the end of the conversation, could they articulate your core business risk better than you could? (This shows they understood)

- Did they propose any alternative approaches you hadn’t considered? (This shows strategic thinking)

Take detailed notes. The team that asks the hardest questions and makes you think differently about your problem is often the right choice.

Step 2: The Technical Approach Review (Week 2)

Ask the finalists to provide a lightweight technical approach document (2-3 pages, not 40 pages). This should outline:

- What they believe your riskiest assumption is

- The absolute minimum features needed to test it

- Proposed technology stack with rationale

- Timeline broken into learning milestones, not just deliverables

- Success metrics they’d track

Pay attention to what they propose cutting from your feature list. Good MVP app developers should recommend building less than you initially wanted, not more.

Step 3: The Reference Deep Dive (Weeks 2-3)

Don’t just ask for references—ask the right questions when you call them.

Questions to ask references:

- “Tell me about a time when initial assumptions proved wrong. How did the team handle the pivot?”

- “What was the ratio of features they recommended building versus features you initially wanted?” (You want them cutting features)

- “How did they measure success? What metrics did they prioritize?”

- “Would you hire them again for your next MVP?” (Only an unqualified yes counts)

One founder gave me the best reference question I’ve ever heard: “If you were starting over with the knowledge you have now, what would you do differently in your engagement with them?” The answer reveals both strengths and weaknesses honestly.

Step 4: The Chemistry Test (Week 3)

You’ll be working closely with your MVP app developers for 3-6 months minimum. Once a team clears a baseline of technical competence, chemistry matters more than additional technical skill.

Ask yourself:

- Do I trust them to tell me when I’m wrong?

- Do they explain technical concepts in ways I understand?

- Do they seem energized by the problem I’m solving?

- Can I imagine calling them at 10 PM with an urgent question?

If any answer is no, keep looking. Technical execution matters, but partnership quality determines whether you’ll successfully navigate the inevitable challenges ahead.

Step 5: The Contract Clarity Assessment (Week 4)

Read the proposed contract carefully. Red flags include:

- Fixed-scope contracts with no flexibility for learning-based changes

- Hourly billing with no caps or estimates

- Ownership clauses that don’t give you full rights to the code and IP

- No defined process for handling disagreements or changes

- Vague deliverables that aren’t measurable

Green flags include:

- Milestone-based payments tied to learning objectives, not just features delivered

- Clearly defined pivot or iteration capacity

- Transparent pricing with no hidden fees

- Exit clauses that allow you to take your code and leave if it’s not working

- Post-launch support explicitly included

Common Mistakes Founders Make When Hiring MVP App Developers (And How to Avoid Them)

Even founders who do extensive research make predictable mistakes when selecting MVP app developers. Here are the most expensive ones:

Mistake #1: Optimizing for the Lowest Price Instead of the Best Value

The cheapest option almost never delivers the most value. A $25,000 MVP that teaches you nothing and has to be rebuilt is more expensive than a $60,000 MVP that validates your business model and sets you up to scale.

Better approach: Optimize for cost of validated learning. Ask: “How much will I pay per validated assumption?” Not: “What’s the absolute minimum I can spend?”
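The cost-of-validated-learning question reduces to simple division: what you spent, divided by the number of assumptions the MVP actually answered with evidence. A minimal Python sketch follows, using the $25,000 and $60,000 figures from the comparison above; the count of four validated assumptions for the $60,000 build is a hypothetical number chosen for illustration.

```python
import math


def cost_per_validated_assumption(total_cost: float, validated: int) -> float:
    """Dollars spent per assumption the MVP answered with real user evidence.

    Returns infinity when nothing was validated: the money bought no learning.
    """
    if validated <= 0:
        return math.inf
    return total_cost / validated


cheap = cost_per_validated_assumption(25_000, validated=0)  # rebuilt, taught nothing
solid = cost_per_validated_assumption(60_000, validated=4)  # hypothetical count
print(cheap, solid)  # inf 15000.0
```

Framed this way, the "cheaper" build has no finite cost per lesson at all, which is exactly why the lowest quote is rarely the best value.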

Mistake #2: Choosing Based on Portfolio Impressiveness Rather Than Process Quality

Impressive portfolio companies don’t necessarily mean the team is right for MVP work. A firm that built beautiful apps for Microsoft and Coca-Cola might be terrible at scrappy, iterative startup development.

Better approach: Look for portfolios that show evolution and learning, not just polished final products. Ask to see version 1 and version 2 of their projects with explanations of what changed based on user feedback.

Mistake #3: Not Defining Success Metrics Before Development Starts

If you haven’t defined what success looks like, you can’t hold your MVP app developers accountable or make good decisions about iteration.

Better approach: Work with your developers to define 3-5 key metrics before writing any code. These might be activation rate, feature engagement, retention, conversion, or qualitative feedback themes. Build measurement of these metrics into the MVP from day one.
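To make two of those metrics concrete, here is a minimal Python sketch that computes activation rate and a simple retention rate from a raw event log. The event names (`signed_up`, `core_action`), the seven-day retention window, and the sample rows are all assumptions for illustration; in practice the rows would come from whatever analytics tool you instrument.

```python
from datetime import date

# Hypothetical event log: (user_id, event_name, day).
events = [
    ("u1", "signed_up", date(2026, 1, 1)),
    ("u1", "core_action", date(2026, 1, 1)),
    ("u1", "core_action", date(2026, 1, 9)),
    ("u2", "signed_up", date(2026, 1, 2)),
    ("u2", "core_action", date(2026, 1, 3)),
    ("u3", "signed_up", date(2026, 1, 2)),
]


def activation_rate(events) -> float:
    """Share of signed-up users who performed the core action at least once."""
    signed_up = {u for u, e, _ in events if e == "signed_up"}
    activated = {u for u, e, _ in events if e == "core_action" and u in signed_up}
    return len(activated) / len(signed_up) if signed_up else 0.0


def retention_rate(events, min_days: int = 7) -> float:
    """Share of signed-up users who performed the core action min_days or more after signup."""
    signup_day = {u: d for u, e, d in events if e == "signed_up"}
    retained = {
        u for u, e, d in events
        if e == "core_action" and u in signup_day and (d - signup_day[u]).days >= min_days
    }
    return len(retained) / len(signup_day) if signup_day else 0.0


print(f"activation: {activation_rate(events):.0%}")  # activation: 67%
print(f"retention:  {retention_rate(events):.0%}")   # retention:  33%
```

Defining the metrics as code before launch forces the team to agree on exactly which events must be tracked from day one.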

Mistake #4: Treating the MVP as a Deliverable Rather Than a Learning Platform

Founders who see their MVP as a finished product get disappointed when it needs iteration. Founders who see it as a learning platform celebrate the insights it generates, even when those insights require pivoting.

Better approach: Mentally budget 30-40% extra for iteration based on what you learn. The MVP launch is the beginning of learning, not the end of building.

Mistake #5: Not Protecting Enough Runway for Post-Launch Iteration

Some founders spend 80% of their budget building the MVP, leaving only 20% for marketing, iteration, and operations. Then they can’t act on what they learn because they’re out of money.

Better approach: Follow the 50/30/20 rule—spend 50% on MVP development, reserve 30% for iteration and improvements, keep 20% for operations and user acquisition. This ensures you have the capital to act on validated learning.
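Applied to a concrete budget, the rule is plain percentage arithmetic. The Python sketch below splits Daniel’s $200,000 seed round from the opening story; it illustrates the 50/30/20 split rather than serving as a financial model, and the bucket names are our own labels.

```python
# 50/30/20 rule, expressed as integer percentages so the math stays exact.
SPLIT_PERCENT = {
    "mvp_development": 50,
    "iteration": 30,
    "operations_and_acquisition": 20,
}
assert sum(SPLIT_PERCENT.values()) == 100, "split must total 100%"


def split_budget(total: int) -> dict[str, float]:
    """Divide a total budget into the three buckets of the 50/30/20 rule."""
    return {bucket: total * pct / 100 for bucket, pct in SPLIT_PERCENT.items()}


plan = split_budget(200_000)
for bucket, amount in plan.items():
    print(f"{bucket:<28} ${amount:>9,.0f}")

# Daniel's half-built product had already consumed $127,000.
overspend = 127_000 - plan["mvp_development"]
print(f"Over the development cap by ${overspend:,.0f}")  # Over the development cap by $27,000
```

The output makes the opening story’s problem visible: $127,000 spent on the build left Daniel $73,000 for everything else, instead of the $100,000 the rule reserves for iteration, operations, and user acquisition.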

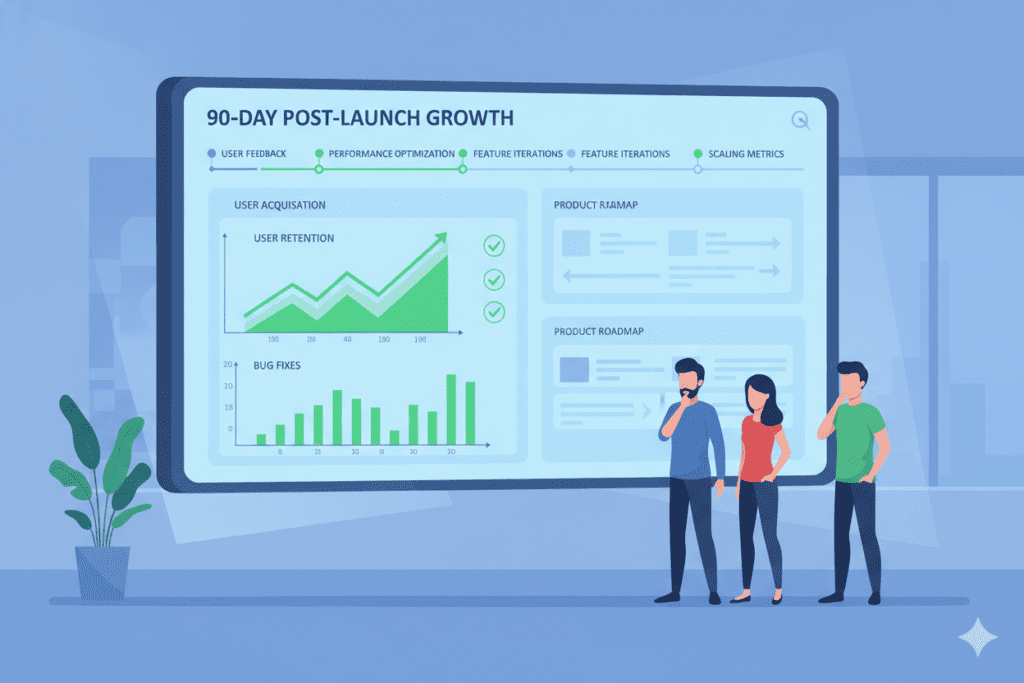

What Happens After You Launch: The Critical 90 Days with the Right MVP App Developers

Most founders think the relationship with their MVP app developers ends at launch. That’s exactly when the most important work begins.

The 90 days following your MVP launch determine whether your product succeeds or fails. Here’s what should happen during that critical window:

Days 1-30: Intensive Monitoring and Rapid Bug Fixes

Real users do things you never anticipated. Bugs emerge that weren’t caught in testing. User flows break under actual usage patterns.

Quality MVP app developers don’t disappear after launch. They monitor your analytics obsessively during this period, fix critical bugs within hours (not days), and help you distinguish between bugs that need immediate fixes versus features that need rethinking.

At BkAbhi, we treat the first 30 days post-launch as an extension of development, not an afterthought. We include it in our standard engagements because we know this is when the most valuable learning happens.

Days 31-60: Analyzing User Behavior and Identifying Patterns

By weeks 5-6, you should have enough data to see patterns in user behavior. Which features are being used? Where are users dropping off? What correlates with retention?

Your MVP app developers should be helping you interpret this data and translate it into actionable insights. “42% of users abandon during onboarding” is data. “Users are abandoning because the email verification step is confusing” is insight.
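Finding where users drop off is the mechanical part; explaining why is the insight. This Python sketch handles only the first half: given counts of users reaching each onboarding step, it reports the step with the largest loss. The step names and counts are hypothetical, chosen so the email-verification step loses 42% of users to mirror the example above; the "why" still has to come from talking to users.

```python
# Hypothetical onboarding funnel: users who reached each step, in order.
funnel = [
    ("signed_up", 1000),
    ("verified_email", 580),
    ("completed_profile", 520),
    ("created_first_project", 400),
]


def step_dropoffs(steps):
    """Return (from_step, to_step, fraction_lost) for each consecutive pair of steps."""
    results = []
    for (prev_name, prev_n), (name, n) in zip(steps, steps[1:]):
        lost = 1 - n / prev_n if prev_n else 0.0  # guard against an empty step
        results.append((prev_name, name, lost))
    return results


worst = max(step_dropoffs(funnel), key=lambda step: step[2])
print(f"Largest drop: {worst[0]} -> {worst[1]} ({worst[2]:.0%} lost)")
# Largest drop: signed_up -> verified_email (42% lost)
```

A result like this tells you where to point your user interviews; it does not tell you what they will find.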

Days 61-90: Strategic Iteration Planning

Based on what you’ve learned, what should you build next? What should you remove? What should you double down on?

This is where having the right MVP app developers makes the biggest difference. Teams that understand startup dynamics help you make strategic decisions about iteration priorities. They help you resist the temptation to chase every feature request and instead focus on what moves your key metrics.

Many of BkAbhi’s most successful client relationships extend well beyond the initial MVP because founders realize the value of having strategic technical partners who understand their business, their users, and their metrics deeply.

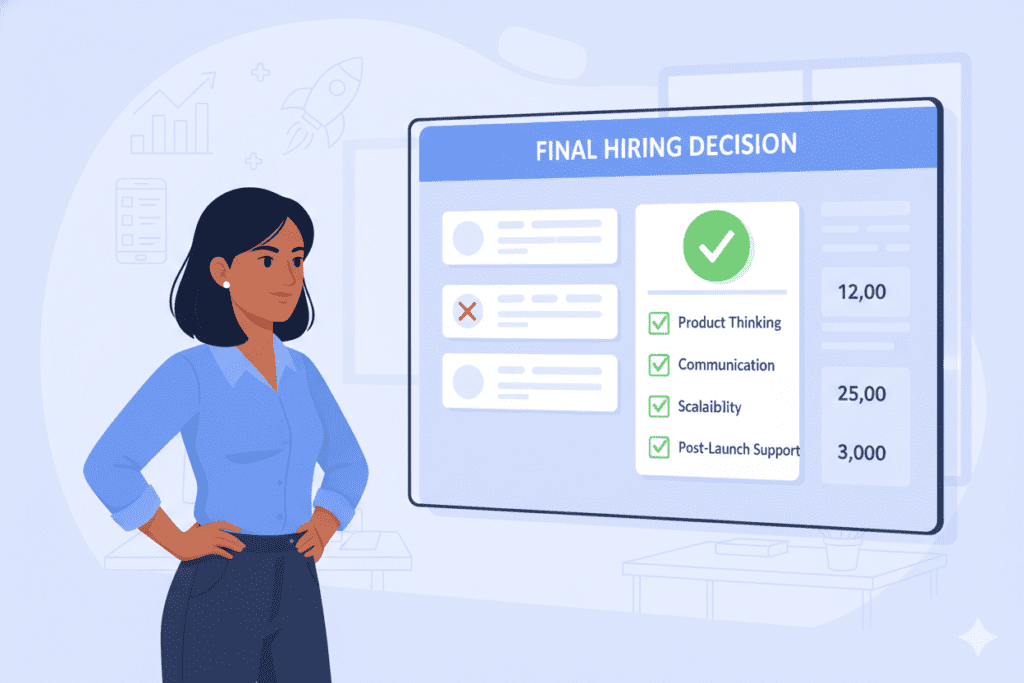

Making Your Decision: A Framework for Choosing MVP App Developers with Confidence

You’ve done the research. You’ve had the calls. You’ve checked the references. Now you need to make a decision.

Here’s a simple framework that’s helped dozens of founders choose confidently:

Score each potential team on a 1-10 scale across these dimensions:

- Strategic Understanding: Do they understand startup dynamics and MVP philosophy? (Weight: 25%)

- Technical Capability: Can they build what’s needed with quality and speed? (Weight: 20%)

- Communication Quality: Are they clear, honest, and proactive? (Weight: 15%)

- Process Maturity: Do they have systems for managing projects effectively? (Weight: 15%)

- Cost Efficiency: How much validated learning do they deliver per dollar spent? (Weight: 15%)

- Chemistry Fit: Do you enjoy working with them and trust them? (Weight: 10%)

Multiply each score by its weight and add the results. Because the weights add up to 100%, the total stays on the same 1-10 scale. The team with the highest total is usually the right choice.
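The weighted sum is easy to get wrong by hand when you are comparing several teams. Here is a minimal Python sketch, assuming the six weights listed above; the dimension keys, team names, and scores are hypothetical examples, not data from real evaluations.

```python
# A minimal sketch of the weighted scoring framework above.
# Weights (in percent) come from the article; team names and scores are made up.

WEIGHTS = {
    "strategic_understanding": 25,
    "technical_capability": 20,
    "communication_quality": 15,
    "process_maturity": 15,
    "cost_efficiency": 15,
    "chemistry_fit": 10,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-10 scores into a weighted total on the same 1-10 scale."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the six dimensions")
    for name, value in scores.items():
        if not 1 <= value <= 10:
            raise ValueError(f"{name} score must be between 1 and 10, got {value}")
    # Integer weights sum to 100, so dividing by 100 rescales to 1-10.
    return sum(WEIGHTS[name] * value for name, value in scores.items()) / 100


if __name__ == "__main__":
    candidates = {
        "Team A": dict(zip(WEIGHTS, [9, 7, 8, 6, 7, 8])),
        "Team B": dict(zip(WEIGHTS, [6, 9, 7, 8, 8, 7])),
    }
    ranked = sorted(candidates.items(),
                    key=lambda item: weighted_score(item[1]), reverse=True)
    for name, scores in ranked:
        print(f"{name}: {weighted_score(scores):.2f}")
```

With these sample scores, Team A totals 7.60 and Team B totals 7.45. Team B scores higher on technical capability, but Team A comes out ahead because strategic understanding carries the largest weight.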

But here’s the most important rule: if your gut says no despite a high score, listen to your gut. You’ll be working closely with these people during the most stressful period of your startup journey. Chemistry and trust matter.

The BkAbhi Difference: Why Founders Choose Us for MVP App Development

Let me be direct: there are excellent MVP app developers beyond BkAbhi. Some of them might even be better fits for your specific needs.

But here’s why founders consistently choose us when they’re comparing options:

We’ve Been in Your Shoes

BkAbhi wasn’t started by career agency owners. We’re founders who’ve experienced the pain of building the wrong thing, running out of runway, and scrambling to pivot. We understand the fear, the pressure, and the high stakes because we’ve lived them.

This lived experience shapes everything. We don’t just build what you ask for—we challenge your assumptions because we know how expensive wrong assumptions are. We’re not order-takers; we’re partners who’ve been in your position and want to help you avoid our mistakes.

We Measure Success by Your Learning, Not Our Deliverables

Many development agencies measure success by whether they delivered on time and on budget according to the initial specification. We measure success by whether you learned what you needed to learn to make confident decisions about your product’s future.

Sometimes this means delivering less than initially scoped because we discovered a leaner way to test your assumptions. Sometimes it means pivoting mid-project because early signals suggested a different direction. We’re optimized for your long-term success, not for maximizing our short-term revenue.

We’re Honest Even When It’s Not What You Want to Hear

We’ve turned down projects because we didn’t think the founder’s idea was ready for development. We’ve told potential clients to spend more time on customer interviews instead of jumping into coding. We’ve recommended no-code solutions when custom development wasn’t justified.

Sometimes this costs us projects. But it builds trust with the founders we do work with. If we tell you something is the right approach, you can trust we’re not just saying it to increase our fees.

We Build Relationships, Not Just Products

Our goal isn’t to build your MVP and move on to the next client. It’s to become your long-term technical partner who understands your business deeply and helps you make better product decisions over time.

Many of our best client relationships have lasted years, evolving from MVP development to scaling to building version 2.0. We provide continuity, institutional knowledge, and strategic partnership that fragmented relationships with different agencies can never match.


Your Next Steps: How to Get Started with the Right MVP App Developers

You’ve read this far, which means you’re serious about choosing the right MVP app developers and giving your startup the best possible chance at success.

Here’s what you should do next:

Step 1: Document Your Core Assumptions

Before talking to any development team, spend a few hours writing down what you believe about your customers, your market, your solution, and your business model. Rank these assumptions by risk—which ones, if wrong, would invalidate your entire approach?

This document becomes the foundation for productive conversations with potential MVP app developers. Teams that engage thoughtfully with your assumptions are revealing their strategic